
Coach

Coach is a Python reinforcement learning framework containing implementations of many state-of-the-art algorithms.

It exposes a set of easy-to-use APIs for experimenting with new RL algorithms, and allows simple integration of new environments to solve. Basic RL components (algorithms, environments, neural network architectures, exploration policies, ...) are well decoupled, so that extending and reusing existing components is fairly painless.

Training an agent to solve an environment is as easy as running:

coach -p CartPole_DQN -r

- Release 0.8.0 (initial release)

- Release 0.9.0

- Release 0.10.0

- Release 0.11.0

- Release 0.12.0

- Release 1.0.0 (current release)

Table of Contents

- Benchmarks

- Installation

- Getting Started

- Supported Environments

- Supported Algorithms

- Citation

- Contact

- Disclaimer

Benchmarks

One of the main challenges when building a research project, or a solution based on a published algorithm, is getting a concrete and reliable baseline that reproduces the algorithm's results as reported by its authors. To address this problem, we are releasing a set of benchmarks showing that Coach reliably reproduces the results of many state-of-the-art algorithms.

Installation

Note: Coach has only been tested on Ubuntu 16.04 LTS, and with Python 3.5.

For some information on installing on Ubuntu 17.10 with Python 3.6.3, please refer to the following issue: https://github.com/IntelLabs/coach/issues/54

Installing Coach requires a few prerequisites. The following commands set up all the basics needed to run Coach on top of OpenAI Gym environments:

# General

sudo -E apt-get install python3-pip cmake zlib1g-dev python3-tk python-opencv -y

# Boost libraries

sudo -E apt-get install libboost-all-dev -y

# Scipy requirements

sudo -E apt-get install libblas-dev liblapack-dev libatlas-base-dev gfortran -y

# PyGame

sudo -E apt-get install libsdl-dev libsdl-image1.2-dev libsdl-mixer1.2-dev libsdl-ttf2.0-dev \
libsmpeg-dev libportmidi-dev libavformat-dev libswscale-dev -y

# Dashboard

sudo -E apt-get install dpkg-dev build-essential python3.5-dev libjpeg-dev libtiff-dev libsdl1.2-dev libnotify-dev \
freeglut3 freeglut3-dev libsm-dev libgtk2.0-dev libgtk-3-dev libwebkitgtk-dev libgtk-3-dev libwebkitgtk-3.0-dev \
libgstreamer-plugins-base1.0-dev -y

# Gym

sudo -E apt-get install libav-tools libsdl2-dev swig cmake -y

We recommend installing Coach in a virtualenv:

sudo -E pip3 install virtualenv

virtualenv -p python3 coach_env

. coach_env/bin/activate

Finally, install Coach using pip:

pip3 install rl_coach

Alternatively, for a development environment, install Coach from the cloned repository:

cd coach

pip3 install -e .

If a GPU is present, Coach's pip package will install tensorflow-gpu by default. If a GPU is not present, an Intel-optimized TensorFlow will be installed.

In addition to OpenAI Gym, several other environments were tested and are supported. Please follow the instructions in the Supported Environments section below in order to install more environments.

Getting Started

Tutorials and Documentation

Basic Usage

Running Coach

To make results reproducible, Coach defines a mechanism called a preset.

There are several available presets under the presets directory.

To list all the available presets use the -l flag.

To run a preset, use:

coach -r -p <preset_name>

For example:

- CartPole environment using Policy Gradients (PG):

coach -r -p CartPole_PG

- Basic level of Doom using Dueling network and Double DQN (DDQN) algorithm:

coach -r -p Doom_Basic_Dueling_DDQN

Some presets apply to a group of environment levels, such as the entire Atari or MuJoCo suites.

To use these presets, the requested level should be defined using the -lvl flag.

For example:

- Pong using the Neural Episodic Control (NEC) algorithm:

coach -r -p Atari_NEC -lvl pong

There are several types of agents that can benefit from running them in a distributed fashion with multiple workers in parallel. Each worker interacts with its own copy of the environment but updates a shared network, which improves the data collection speed and the stability of the learning process.

To specify the number of workers to run, use the -n flag.

For example:

- Breakout using Asynchronous Advantage Actor-Critic (A3C) with 8 workers:

coach -r -p Atari_A3C -lvl breakout -n 8
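The flags shown above (-r, -p, -lvl and -n) can also be assembled programmatically, for instance when launching several experiments from a script. The following is a minimal sketch; `build_coach_command` is a hypothetical helper written for illustration and is not part of Coach. It only builds the argument list and does not run anything.

```python
import shlex


def build_coach_command(preset, level=None, num_workers=None, render=True):
    """Assemble a `coach` command line from the flags used in the examples.

    Hypothetical helper for illustration only; it is not part of Coach.
    """
    args = ["coach"]
    if render:
        args.append("-r")                  # -r, as used in the examples above
    args += ["-p", preset]                 # -p selects the preset
    if level is not None:
        args += ["-lvl", level]            # -lvl selects a level within a suite
    if num_workers is not None:
        if num_workers < 1:
            raise ValueError("num_workers must be at least 1")
        args += ["-n", str(num_workers)]   # -n sets the number of workers
    return args


cmd = build_coach_command("Atari_A3C", level="breakout", num_workers=8)
print(" ".join(shlex.quote(arg) for arg in cmd))
```

Once Coach is installed, the returned list could be passed to `subprocess.run(cmd, check=True)` to start the run.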

It is easy to create new presets for different levels or environments by following the same pattern as in presets.py.

More usage examples can be found here.

Running Coach Dashboard (Visualization)

Training an agent to solve an environment can be tricky at times.

To help debug the training process, Coach outputs several signals per trained algorithm that track algorithmic performance.

While Coach trains an agent, a CSV file containing the relevant training signals is saved to the 'experiments' directory. Coach's dashboard can then be used to dynamically visualize the training signals and track algorithmic behavior.

To use it, run:

dashboard
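The CSV file of training signals can also be inspected outside the dashboard. The sketch below reads one column and smooths it with a trailing moving average. The sample data and the column names (`Episode #`, `Training Reward`) are assumptions made for illustration; check the header of an actual file in the 'experiments' directory for the real signal names.

```python
import csv
import io

# Hypothetical sample standing in for a CSV written by Coach. The column
# names are assumptions and may differ from the real signal names.
SAMPLE_CSV = """Episode #,Training Reward
1,10.0
2,20.0
3,30.0
4,40.0
"""


def read_signal(csv_file, column):
    """Return the numeric values of one column, skipping empty cells."""
    values = []
    for row in csv.DictReader(csv_file):
        cell = row.get(column, "")
        if cell not in ("", None):
            values.append(float(cell))
    return values


def moving_average(values, window):
    """Average each value with up to `window - 1` preceding values."""
    smoothed = []
    for i in range(len(values)):
        chunk = values[max(0, i - window + 1): i + 1]
        smoothed.append(sum(chunk) / len(chunk))
    return smoothed


rewards = read_signal(io.StringIO(SAMPLE_CSV), "Training Reward")
print(moving_average(rewards, window=2))
```

To read a real file, replace `io.StringIO(SAMPLE_CSV)` with an open file handle, for example `open(path, newline="")`.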

Distributed Multi-Node Coach

As of release 0.11.0, Coach supports horizontal scaling for training RL agents on multiple nodes. In release 0.11.0 this was tested on the ClippedPPO and DQN agents. For usage instructions please refer to the documentation here.

Batch Reinforcement Learning

Training and evaluating an agent from a dataset of experience, where no simulator is available, is supported in Coach. There are example presets and a tutorial.

Supported Environments

- OpenAI Gym:

Installed by default by Coach's installer (see note on MuJoCo version below).

- ViZDoom:

Follow the instructions described in the ViZDoom repository -

https://github.com/mwydmuch/ViZDoom

Additionally, Coach assumes that the environment variable VIZDOOM_ROOT points to the ViZDoom installation directory.

- Roboschool:

Follow the instructions described in the roboschool repository.

- GymExtensions:

Follow the instructions described in the GymExtensions repository -

https://github.com/Breakend/gym-extensions

Additionally, add the installation directory to the PYTHONPATH environment variable.

- PyBullet:

Follow the instructions described in the Quick Start Guide (basically just 'pip install pybullet').

- CARLA:

Download release 0.8.4 from the CARLA repository -

https://github.com/carla-simulator/carla/releases

Install the python client and dependencies from the release tarball:

pip3 install -r PythonClient/requirements.txt

pip3 install PythonClient

Create a new CARLA_ROOT environment variable pointing to CARLA's installation directory.

A simple CARLA settings file (CarlaSettings.ini) is supplied with Coach, and is located in the environments directory.

- Starcraft:

Follow the instructions described in the PySC2 repository.

- DeepMind Control Suite:

Follow the instructions described in the DeepMind Control Suite repository.

- Robosuite:

Note: To use Robosuite-based environments, please install Coach from the latest cloned repository. It is not yet available as part of the rl_coach package on PyPI.

Follow the instructions described in the robosuite documentation (see note on MuJoCo version below).
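Several of the environments above rely on environment variables (VIZDOOM_ROOT, CARLA_ROOT, and an entry on PYTHONPATH for GymExtensions). The sketch below shows one way to check them before starting a run. Both functions are hypothetical helpers, not part of Coach, and the paths in the example are placeholders.

```python
import os


def missing_env_vars(required, environ=None):
    """Return the names in `required` that are unset or empty."""
    environ = os.environ if environ is None else environ
    return [name for name in required if not environ.get(name)]


def dir_on_pythonpath(directory, environ=None):
    """Check whether `directory` is listed in the PYTHONPATH variable."""
    environ = os.environ if environ is None else environ
    entries = environ.get("PYTHONPATH", "").split(os.pathsep)
    target = os.path.normpath(directory)
    return any(os.path.normpath(entry) == target for entry in entries if entry)


# Placeholder environment used only for this example.
fake_env = {
    "VIZDOOM_ROOT": "/opt/ViZDoom",
    "PYTHONPATH": os.pathsep.join(["/opt/gym-extensions", "/opt/other"]),
}
print(missing_env_vars(["VIZDOOM_ROOT", "CARLA_ROOT"], fake_env))
print(dir_on_pythonpath("/opt/gym-extensions/", fake_env))
```

Calling either function without the `environ` argument checks the real process environment instead of the placeholder dictionary.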

Note on MuJoCo version

OpenAI Gym supports MuJoCo only up to version 1.5 (and corresponding mujoco-py version 1.50.x.x). The Robosuite simulation framework, however, requires MuJoCo version 2.0 (and corresponding mujoco-py version 2.0.2.9, as of robosuite version 1.2). Therefore, if you wish to run both Gym-based MuJoCo environments and Robosuite environments, it's recommended to have a separate virtual environment for each.

Please note that all Gym-based MuJoCo presets in Coach (rl_coach/presets/Mujoco_*.py) have been validated only with MuJoCo 1.5 (including the reported benchmark results).
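The version split described in this note can be expressed as a small check. The sketch below maps a mujoco-py version string to the stack the note associates with it; `mujoco_py_stack` is a hypothetical helper, and it is a simplification that only recognizes the two version series named above (1.50.x.x and 2.0.x.x).

```python
def mujoco_py_stack(version):
    """Map a mujoco-py version string to "gym", "robosuite" or "unknown".

    Hypothetical helper for illustration; it only knows the two version
    series mentioned in the note above.
    """
    try:
        major_minor = tuple(int(part) for part in version.split(".")[:2])
    except ValueError:
        return "unknown"      # non-numeric version components
    if major_minor == (1, 50):
        return "gym"          # MuJoCo 1.5, used by the Gym-based presets
    if major_minor == (2, 0):
        return "robosuite"    # MuJoCo 2.0, required by Robosuite
    return "unknown"


for candidate in ("1.50.1.68", "2.0.2.9"):
    print(candidate, "->", mujoco_py_stack(candidate))
```

The version string of an installed package could be obtained with `importlib.metadata.version("mujoco-py")` on Python 3.8 or later; running the check once per virtual environment confirms that each one holds the intended version.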

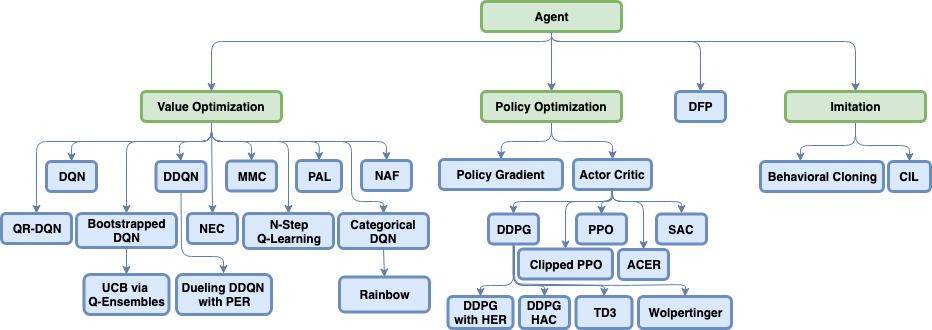

Supported Algorithms

Value Optimization Agents

- Deep Q Network (DQN) (code)

- Double Deep Q Network (DDQN) (code)

- Dueling Q Network

- Mixed Monte Carlo (MMC) (code)

- Persistent Advantage Learning (PAL) (code)

- Categorical Deep Q Network (C51) (code)

- Quantile Regression Deep Q Network (QR-DQN) (code)

- N-Step Q Learning | Multi Worker Single Node (code)

- Neural Episodic Control (NEC) (code)

- Normalized Advantage Functions (NAF) | Multi Worker Single Node (code)

- Rainbow (code)

Policy Optimization Agents

- Policy Gradients (PG) | Multi Worker Single Node (code)

- Asynchronous Advantage Actor-Critic (A3C) | Multi Worker Single Node (code)

- Deep Deterministic Policy Gradients (DDPG) | Multi Worker Single Node (code)

- Proximal Policy Optimization (PPO) (code)

- Clipped Proximal Policy Optimization (CPPO) | Multi Worker Single Node (code)

- Generalized Advantage Estimation (GAE) (code)

- Sample Efficient Actor-Critic with Experience Replay (ACER) | Multi Worker Single Node (code)

- Soft Actor-Critic (SAC) (code)

- Twin Delayed Deep Deterministic Policy Gradient (TD3) (code)

General Agents

- Direct Future Prediction (DFP) | Multi Worker Single Node (code)

Imitation Learning Agents

- Behavioral Cloning (BC) (code)

- Conditional Imitation Learning (code)

Hierarchical Reinforcement Learning Agents

Memory Types

Exploration Techniques

- E-Greedy (code)

- Boltzmann (code)

- Ornstein–Uhlenbeck process (code)

- Normal Noise (code)

- Truncated Normal Noise (code)

- Bootstrapped Deep Q Network (code)

- UCB Exploration via Q-Ensembles (UCB) (code)

- Noisy Networks for Exploration (code)

Citation

If you used Coach for your work, please use the following citation:

@misc{caspi_itai_2017_1134899,

author = {Caspi, Itai and

Leibovich, Gal and

Novik, Gal and

Endrawis, Shadi},

title = {Reinforcement Learning Coach},

month = dec,

year = 2017,

doi = {10.5281/zenodo.1134899},

url = {https://doi.org/10.5281/zenodo.1134899}

}

Contact

We'd be happy to get any questions or contributions through GitHub issues and PRs.

Please make sure to take a look here before filing an issue or proposing a PR.

The Coach development team can also be contacted over email.

Disclaimer

Coach is released as reference code for research purposes. It is not an official Intel product, and the level of quality and support may not be as expected from an official product. Additional algorithms and environments are planned to be added to the framework. Feedback and contributions from the open source and RL research communities are more than welcome.